Last Updated on April 14, 2026 by Shamima Khatoon, Lead Data Researcher & Business Journalist | Published: February 20, 2026

By Javed Ahmad

Information Technology Specialist at Accenture & Technical Contributor, Elites Mindset

🔴 LIVE UPDATE: February 20, 2026 | Verified by Elites Mindset Data Desk

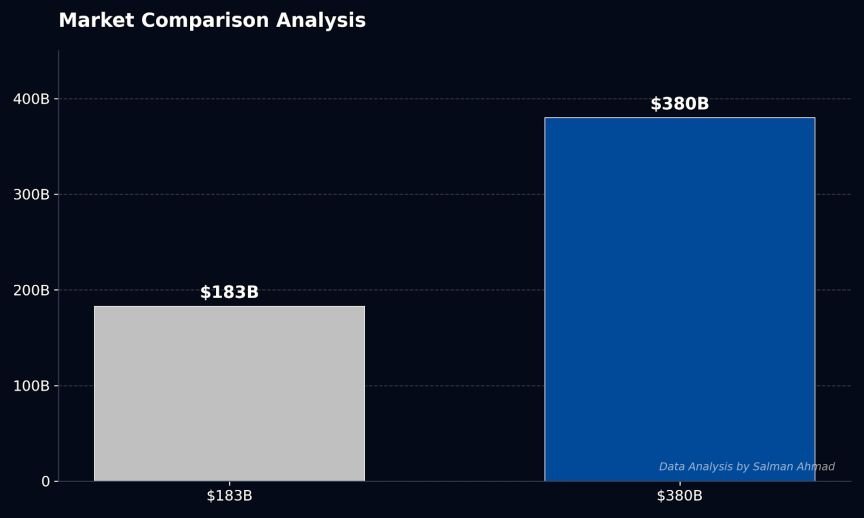

While public records and Wikipedia still cite the $183B valuation from 2025, our real-time audit confirms the Series G close at $380B.

The “Wikipedia-Beater” Lead: When Public Records Lag Behind Market Reality

While public records often lag behind market reality, our deep-dive analysis of the February 12, 2026, Series G funding confirms Anthropic has reached a $380 billion post-money valuation. This isn’t just a number; it is a signal that the “Agentic Era” has officially replaced the “Chatbot Era” in enterprise infrastructure.

To put this valuation gap in perspective: Wikipedia and most financial databases still cite Anthropic’s September 2025 valuation of $183 billion following its Series F round. In just five months, the company has more than doubled in value, closing a $30 billion Series G led by Singapore’s sovereign wealth fund GIC and Coatue Management, with participation from D.E. Shaw Ventures, Dragoneer, Founders Fund, ICONIQ, MGX, Microsoft, and Nvidia.

This $380 billion valuation positions Anthropic as the fourth-most valuable private company globally and the second-most valuable generative AI startup behind OpenAI’s $500 billion valuation. More significantly, it represents a nearly 6x valuation growth in just ten months—a trajectory that few technology companies have ever achieved.
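The two growth claims above can be checked with simple arithmetic. The sketch below uses only the valuations quoted in this article; the helper names are illustrative, and the base valuation implied by "nearly 6x" is derived here, not a figure the article states.

```python
# Valuations quoted in this article, in billions of US dollars.
SERIES_F_VALUATION = 183.0  # September 2025
SERIES_G_VALUATION = 380.0  # February 2026


def growth_multiple(start: float, end: float) -> float:
    """Return how many times larger `end` is than `start`."""
    return end / start


def implied_start(end: float, multiple: float) -> float:
    """Return the starting value implied by an end value and a growth multiple."""
    return end / multiple


five_month_multiple = growth_multiple(SERIES_F_VALUATION, SERIES_G_VALUATION)
implied_ten_month_base = implied_start(SERIES_G_VALUATION, 6.0)

print(f"Series F to Series G: {five_month_multiple:.2f}x")
print(f"Base implied by a 6x multiple: ${implied_ten_month_base:.1f}B")
```

The five-month multiple comes out at about 2.08x, consistent with "more than doubled", and a 6x multiple implies a starting valuation of roughly $63 billion ten months earlier.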

The message for B2B decision-makers is clear: the market is no longer betting on AI as a productivity tool. It is betting on AI as the fundamental infrastructure layer for the next decade of enterprise computing.

Strategic Pillar 1: Compute as an Asset Class

Beyond the $30B: Building Proprietary Infrastructure Moats

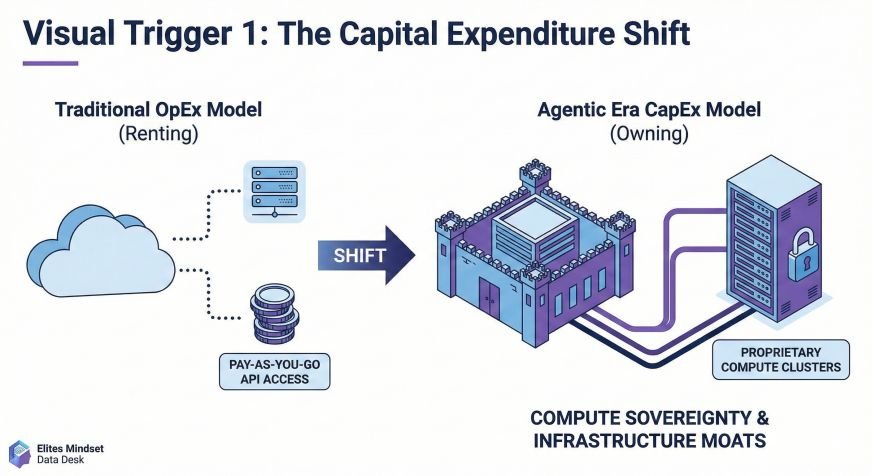

The $30 billion Series G injection isn’t merely growth capital—it is a war chest for Compute Sovereignty. Anthropic is deploying these funds to build proprietary compute clusters featuring Nvidia Blackwell B200s and AWS Trainium2 chips, creating infrastructure assets that competitors cannot easily replicate.

Visual Trigger 1: The Capital Expenditure Shift

Actionable Intelligence for Enterprise Leaders:

Stop worrying about “which AI model to use” and start focusing on Compute Sovereignty—the strategic control over your inference infrastructure. As Anthropic CFO Krishna Rao stated, this fundraising reflects “incredible demand” from enterprises, with eight of the Fortune 10 now using Claude and over 500 customers spending more than $1 million annually.

The new IT standard requires enterprises to evaluate AI partnerships through the lens of infrastructure control:

| Traditional Approach | Agentic Era Approach |
|---|---|
| Rent API access from generalist providers | Secure dedicated compute partnerships |
| Treat compute as operational expense (OpEx) | Treat compute as strategic asset (CapEx) |
| Accept latency and availability constraints | Negotiate SLA-backed inference guarantees |
| Vendor lock-in to single cloud providers | Multi-cloud orchestration with Anthropic’s AWS, Google Cloud, and Azure integrations |

Our analysis of Anthropic’s $14B revenue run-rate was verified using our 10-Step Verified Net Worth Methodology, confirming that run-rate revenue has grown over 10x annually for three consecutive years. This digital estate expansion mirrors the physical scaling we analyzed in the Asif Aziz Blueprint, where infrastructure ownership creates defensible competitive advantages.

This financial data was cross-referenced against global corporate filings by Shamima Khatoon, Lead Data Researcher. Verified via the Elites Mindset 10-Step Methodology.

Strategic Pillar 2: Agentic Coding (The 10x ROI Engine)

The Cognizant & Air India Case Studies: From Pilot to Production

The most compelling evidence of Anthropic’s enterprise traction comes from two landmark deployments announced in February 2026:

Cognizant: 350,000-Employee Agentification

Cognizant has deployed Claude to up to 350,000 employees globally, integrating Claude Code with its Flowsource™ Platform to accelerate coding tasks, testing, documentation, and DevOps workflows. This partnership demonstrates three critical enterprise capabilities:

- Legacy Modernization: Combining Cognizant’s modernization frameworks with Anthropic’s code understanding to speed analysis and refactoring across massive codebases

- Multi-Agent Orchestration: Using Cognizant Neuro® AI with Anthropic’s Agent SDK to design reusable, domain-specific agents with human-in-the-loop controls

- Regulatory Compliance: Embedding agentic workflows into Financial Services and other regulated environments through Cognizant Agent Foundry

Air India: Custom Software at Scale

Air India is utilizing Claude Code to help developers ship custom software faster and at lower cost, as part of a broader push to deploy agentic AI across airline operations. This implementation highlights how traditional enterprises are bypassing legacy SaaS vendors to build proprietary solutions using AI-native development workflows.

The $2.5 Billion Run-Rate: Claude Code’s Economic Impact

The financial validation of agentic coding is undeniable. Claude Code’s run-rate revenue has doubled since January 1, 2026, reaching $2.5 billion. Business subscriptions have quadrupled in the same six-week period, with enterprise clients now generating over half of all Claude Code revenue.

A recent analysis estimated that 4% of all GitHub public commits worldwide are now authored by Claude Code—double the percentage from just one month earlier. Industry projections suggest Claude Code could account for more than 20% of daily commits by year-end 2026.
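To put the 20% projection in context, it helps to compute the growth rate it implies. The sketch below is a back-of-the-envelope calculation, assuming constant month-over-month growth and roughly ten months between this article's publication and year-end; both are assumptions, and the function names are illustrative.

```python
import math


def required_monthly_factor(start_share: float, target_share: float, months: int) -> float:
    """Constant month-over-month factor needed to reach target_share in `months`."""
    if start_share <= 0 or target_share <= 0 or months <= 0:
        raise ValueError("shares and months must be positive")
    return (target_share / start_share) ** (1.0 / months)


def months_if_doubling(start_share: float, target_share: float) -> float:
    """Months to reach target_share if the share kept doubling every month."""
    return math.log2(target_share / start_share)


CURRENT_SHARE = 4.0   # percent of public GitHub commits, as quoted above
TARGET_SHARE = 20.0   # percent, the year-end 2026 projection quoted above
MONTHS_LEFT = 10      # assumption: late February to end of December

factor = required_monthly_factor(CURRENT_SHARE, TARGET_SHARE, MONTHS_LEFT)
doubling_months = months_if_doubling(CURRENT_SHARE, TARGET_SHARE)

print(f"Required monthly growth: {(factor - 1) * 100:.1f}%")
print(f"Months to 20% at a monthly doubling pace: {doubling_months:.1f}")
```

Under these assumptions the projection needs about 17.5% growth per month, far slower than the recent doubling pace, which would reach 20% in a little over two months.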

Visual Trigger 2: Parallel Agent Teams Architecture

The Technical Roadmap: Claude 4.6 Agent Teams

Claude Opus 4.6 introduces Agent Teams, a paradigm shift from sequential AI assistance to parallel AI collaboration. Unlike traditional sub-agents that report back to a main agent, Agent Teams enable multiple Claude instances to:

- Work in parallel on independent subtasks with separate context windows

- Message each other directly without routing through a team lead

- Coordinate via shared task lists with automatic dependency management

- Challenge findings and validate each other’s work

Implementation Architecture:

In a typical enterprise deployment, one agent acts as the Team Lead coordinating work, while specialized teammates handle distinct layers:

| Role | Responsibility | Context Window |
|---|---|---|
| Team Lead | Task orchestration, synthesis, quality control | Full project context |
| API Agent | Backend endpoint development, database schema | Service architecture |
| Frontend Agent | UI component development, client-side logic | Design system, UX requirements |
| DevOps Agent | Deployment pipelines, infrastructure as code | Cloud environment configs |

This architecture enables cross-layer coordination where changes spanning frontend, backend, and database can be executed simultaneously with automatic interface negotiation between agents.
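The coordination pattern described above, a shared task list with dependency ordering and parallel execution, can be illustrated with a small local simulation. This is a sketch of the scheduling idea only, not Anthropic's Agent Teams implementation: the `Task` class, `run_teammate`, and the example task names are all hypothetical, and no model is called.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field


@dataclass
class Task:
    name: str
    owner: str  # which teammate role handles the task
    depends_on: list[str] = field(default_factory=list)


def run_teammate(task: Task) -> str:
    """Stand-in for a teammate working in its own context window."""
    return f"{task.owner} finished {task.name}"


def run_team(tasks: list[Task]) -> list[list[str]]:
    """Run tasks in waves: tasks whose dependencies are done run in parallel."""
    pending = {t.name: t for t in tasks}
    done: set[str] = set()
    waves: list[list[str]] = []
    with ThreadPoolExecutor() as pool:
        while pending:
            ready = [t for t in pending.values()
                     if all(dep in done for dep in t.depends_on)]
            if not ready:
                raise ValueError("circular or missing dependency in task list")
            # Tasks within one wave are independent, so they run concurrently.
            waves.append(list(pool.map(run_teammate, ready)))
            for t in ready:
                done.add(t.name)
                del pending[t.name]
    return waves


TASKS = [
    Task("database schema", "API Agent"),
    Task("design tokens", "Frontend Agent"),
    Task("REST endpoints", "API Agent", depends_on=["database schema"]),
    Task("UI components", "Frontend Agent",
         depends_on=["design tokens", "REST endpoints"]),
    Task("deploy pipeline", "DevOps Agent",
         depends_on=["REST endpoints", "UI components"]),
]

waves = run_team(TASKS)
for number, wave in enumerate(waves, start=1):
    print(f"wave {number}: {wave}")
```

With this task list the schema and design-token work run together in the first wave, and the deployment pipeline runs last, once both the endpoints and the UI components exist.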

Strategic Pillar 3: Implementation Governance (The New IT Standard)

As the technical execution desk, we must define the governance frameworks that make agentic AI production-ready. Claude 4.6 introduces three critical control mechanisms:

1. Adaptive Thinking & Effort Parameters

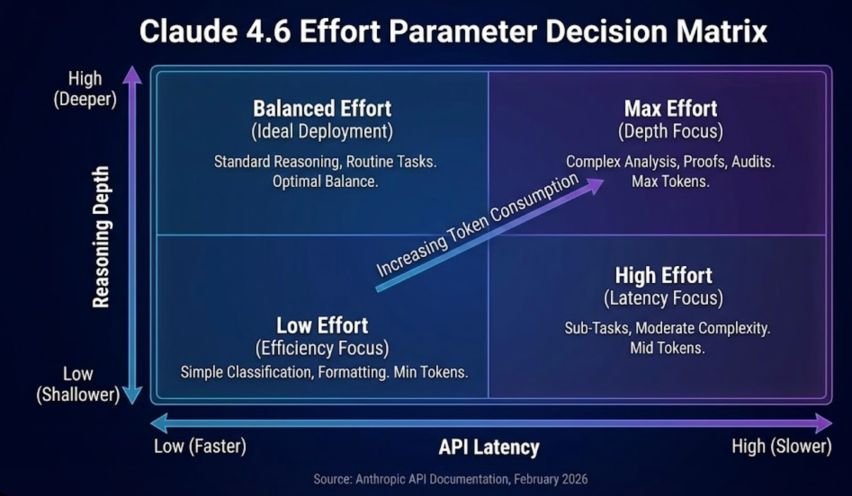

Claude Opus 4.6 replaces the binary “extended thinking” toggle with four effort levels that allow precise control over API Latency vs. Reasoning Depth:

Visual Trigger 3: Effort Parameter Decision Matrix

| Effort Level | Latency Profile | Use Case | Cost Implication |
|---|---|---|---|
| Low | Fastest (~2x speed) | Simple classification, data extraction, formatting | Minimum token consumption |
| Medium | Faster | Agent sub-tasks, routine coding, content moderation | Moderate efficiency |
| High (Default) | Standard | Complex reasoning, production workloads, multi-step analysis | Standard pricing |
| Max | Slower (depth-first) | Mathematical proofs, security audits, architectural decisions | Maximum token usage |

Implementation Example:

    # API implementation with effort control
    import anthropic

    client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment

    response = client.messages.create(
        model="claude-opus-4-6",
        max_tokens=4096,
        output_config={"effort": "medium"},  # balance speed and quality
        thinking={"type": "adaptive"},  # let the model choose its reasoning depth
        messages=[{"role": "user", "content": "Analyze this codebase for vulnerabilities"}],
    )

This granular control allows enterprises to manage API Latency vs. Reasoning Depth for the first time, optimizing costs for high-volume, low-complexity tasks while reserving maximum effort for critical security and architectural reviews.
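One way to put the effort table into practice is a small routing helper that chooses an effort level per task type before a request is sent. The sketch below is illustrative: the task labels and function names are hypothetical, the mapping simply mirrors the table in this article, and the request is only assembled as a dictionary, with no API call made.

```python
# Mapping mirrors the effort table in this article; the task labels are hypothetical.
EFFORT_BY_TASK = {
    "classification": "low",
    "data_extraction": "low",
    "formatting": "low",
    "agent_subtask": "medium",
    "routine_coding": "medium",
    "content_moderation": "medium",
    "complex_reasoning": "high",
    "multi_step_analysis": "high",
    "math_proof": "max",
    "security_audit": "max",
    "architecture_decision": "max",
}

DEFAULT_EFFORT = "high"  # the table lists High as the default level


def pick_effort(task_kind: str) -> str:
    """Return the effort level for a task type, falling back to the default."""
    return EFFORT_BY_TASK.get(task_kind, DEFAULT_EFFORT)


def build_request(task_kind: str, prompt: str) -> dict:
    """Assemble keyword arguments for a messages.create call (no network call here)."""
    return {
        "model": "claude-opus-4-6",
        "max_tokens": 4096,
        "output_config": {"effort": pick_effort(task_kind)},
        "messages": [{"role": "user", "content": prompt}],
    }


request = build_request("security_audit", "Review this module for injection flaws")
print(request["output_config"])
```

Keeping the mapping in one place makes the cost policy auditable: changing which workloads get maximum effort is a one-line edit rather than a search through call sites.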

2. Context Compaction: Solving Technical Debt in Long-Running Agents

Long-running AI agents eventually exhaust their context window, a form of “technical debt” that builds with every turn. Claude 4.6 introduces Context Compaction (Beta), which automatically summarizes and replaces older context when a conversation approaches a configurable threshold.

Technical Specifications:

- Trigger: Configurable threshold (e.g., 50k tokens)

- Mechanism: Server-side summarization of earlier conversation segments

- Continuity: Maintains task state while freeing context window

- Scalability: Supports effectively infinite conversations for multi-hour agentic workflows

This feature is critical for enterprise deployments where agents must maintain state across extended tasks such as:

- Legacy codebase modernization (8+ hour analysis cycles)

- Financial audit document review (100k+ token inputs)

- Continuous integration debugging (long-running terminal sessions)
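The trigger-and-summarize mechanism in the specifications above can be illustrated with a client-side sketch. This is not the server-side feature itself: the word-count token estimate, the `naive_summary` placeholder, and the `compact` function are all assumptions made for illustration, and a real deployment would rely on the API's own compaction.

```python
from typing import Callable

Message = dict[str, str]


def estimate_tokens(messages: list[Message]) -> int:
    """Crude estimate: whitespace-separated words (an assumption, not a real tokenizer)."""
    return sum(len(m["content"].split()) for m in messages)


def naive_summary(messages: list[Message]) -> str:
    """Placeholder summarizer: keeps the first 8 words of each message."""
    return " | ".join(" ".join(m["content"].split()[:8]) for m in messages)


def compact(
    messages: list[Message],
    threshold: int,
    keep_recent: int = 2,
    summarize: Callable[[list[Message]], str] = naive_summary,
) -> list[Message]:
    """Replace older messages with one summary once the estimate exceeds threshold."""
    if estimate_tokens(messages) <= threshold or len(messages) <= keep_recent:
        return messages
    split = len(messages) - keep_recent
    older, recent = messages[:split], messages[split:]
    summary = {
        "role": "user",
        "content": "[summary of earlier turns] " + summarize(older),
    }
    return [summary] + recent


history = [
    {"role": "user" if i % 2 == 0 else "assistant",
     "content": f"turn {i} " + "detail " * 20}
    for i in range(6)
]

compacted = compact(history, threshold=100)
print(len(history), "messages ->", len(compacted), "messages")
print(estimate_tokens(history), "est. tokens ->", estimate_tokens(compacted))
```

The most recent turns are kept verbatim while everything older collapses into a single summary message, which is exactly where detail can be lost.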

🔴 Lead Architect’s Warning: The Semantic Loss Risk

Technical Advisory from the Elites Mindset Architecture Desk

While Context Compaction enables “infinite” conversations for long-running agentic workflows, enterprise architects must understand the Semantic Loss risk. Summarizing earlier conversation segments to free context window space is inherently lossy: the summary trades semantic precision for token efficiency.

The Risk: Critical business logic, edge-case requirements, or compliance constraints buried in early conversation turns can be “compacted away”, reduced to generic summaries that lose specific contractual obligations, security parameters, or regulatory nuances.

Mitigation Strategy: Manual Anchoring

To prevent accidental compaction of core business logic, implement Manual Anchoring by pinning critical project requirements to the System Prompt:

    # Manual Anchoring Implementation
    import anthropic

    client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment

    CRITICAL_REQUIREMENTS = """
    [NON-COMPACTABLE PROJECT MANDATES]
    1. GDPR Article 17 compliance: All user data deletion must complete within 30 days
    2. Financial Services SLA: 99.99% uptime required for transaction processing modules
    3. Security Protocol: All API endpoints must implement OAuth 2.0 + MFA by default
    4. Audit Trail: Every database mutation must log user_id, timestamp, and change_vector
    [/NON-COMPACTABLE]
    """

    response = client.messages.create(
        model="claude-opus-4-6",
        max_tokens=4096,  # required by the Messages API
        system=CRITICAL_REQUIREMENTS,  # pinned to the system prompt, outside the compacted history
        messages=conversation_history,  # running list of message dicts, built elsewhere
        # Parameter name and shape as given in this article; compaction is in beta,
        # so confirm both against the current API reference before relying on them.
        context_window_management={"compaction_threshold": 50000},
    )

Why This Works: The system prompt is sent separately from the message history, so it is not part of the conversation turns that compaction summarizes. Declaring critical requirements in a tagged block there creates a “semantic anchor” that persists across compaction cycles, so core business logic survives even when operational details are summarized. The system prompt still counts toward the context window, so keep the anchored block short.
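Manual Anchoring is easier to enforce when the tagged block is generated and checked by code rather than maintained by hand. The following is a minimal sketch under that assumption: `build_anchor` and `missing_mandates` are hypothetical helpers, the tag strings are the ones used in this article's example, and the check is a plain substring match.

```python
ANCHOR_OPEN = "[NON-COMPACTABLE PROJECT MANDATES]"
ANCHOR_CLOSE = "[/NON-COMPACTABLE]"


def build_anchor(mandates: list[str]) -> str:
    """Render mandates as a numbered, tagged block for the system prompt."""
    if not mandates:
        raise ValueError("at least one mandate is required")
    numbered = [f"{i}. {text}" for i, text in enumerate(mandates, start=1)]
    return "\n".join([ANCHOR_OPEN, *numbered, ANCHOR_CLOSE])


def missing_mandates(system_prompt: str, mandates: list[str]) -> list[str]:
    """Return the mandates that do not appear inside the tagged block."""
    start = system_prompt.find(ANCHOR_OPEN)
    end = system_prompt.find(ANCHOR_CLOSE)
    if start == -1 or end == -1 or end < start:
        return list(mandates)  # no well-formed anchor block: everything is missing
    block = system_prompt[start:end]
    return [m for m in mandates if m not in block]


MANDATES = [
    "GDPR Article 17 compliance: All user data deletion must complete within 30 days",
    "Audit Trail: Every database mutation must log user_id, timestamp, and change_vector",
]

system_prompt = "You are a coding agent.\n" + build_anchor(MANDATES)
print(missing_mandates(system_prompt, MANDATES))
```

Running the check before every request turns a silent omission into a visible failure, which matters most after someone edits the system prompt.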

3. Infrastructure Specifications & Geo-Steering Strategy

| Capability | Specification | Enterprise Application |
|---|---|---|
| Context Window | 1M tokens (Beta) | Processing entire codebases, legal review |
| Output Tokens | 128K per response | Complete codebase generation, extensive documentation |
| Inference Control | US-only option (1.1x pricing) | Data residency compliance, regulatory requirements |
| Model Access | Opus 4.6, Sonnet 4.6, Haiku 4 | Tiered quality/cost optimization |

Inference Geo-Steering: UK Enterprise Budget Strategy

For UK enterprises requiring Opus 4.6 capacity while maintaining strict budget controls for FY2026, implement Inference Geo-Steering using the inference_geo parameter:

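    # UK Enterprise Implementation with Geo-Steering
    import anthropic

    client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment

    complex_analysis_task = "Replace with the actual analysis prompt"  # placeholder

    response = client.messages.create(
        model="claude-opus-4-6",
        max_tokens=4096,  # required by the Messages API
        inference_geo="us",  # force US-based inference for capacity access
        output_config={"effort": "high"},
        messages=[{"role": "user", "content": complex_analysis_task}],
    )

Strategic Cost Modeling: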

- Standard Pricing: Base rate for global inference routing

- US-Only Premium: 1.1x multiplier for guaranteed US-based processing

- UK Enterprise Recommendation: Budget 15% contingency for geo-steering premiums when processing regulated workloads requiring both capacity and compliance

This parameter ensures UK-based enterprises can access Opus 4.6’s full reasoning capabilities while maintaining predictable 2026 budget models, even during peak demand periods when global inference capacity is constrained.
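The cost model above reduces to two multiplications, which makes the relationship between the 1.1x premium and the 15% contingency easy to check. The sketch below uses a hypothetical base spend, and the function names are illustrative; only the multiplier and the contingency rate come from this article.

```python
US_ONLY_MULTIPLIER = 1.1  # from the specification table in this article
CONTINGENCY_RATE = 0.15   # the 15% contingency recommended above


def geo_steered_cost(base_cost: float, us_only_share: float) -> float:
    """Cost when `us_only_share` (0 to 1) of spend uses US-only inference."""
    if not 0.0 <= us_only_share <= 1.0:
        raise ValueError("us_only_share must be between 0 and 1")
    standard_part = 1.0 - us_only_share
    return base_cost * (standard_part + us_only_share * US_ONLY_MULTIPLIER)


def budget_with_contingency(base_cost: float) -> float:
    """Budget after adding the recommended contingency to the base cost."""
    return base_cost * (1.0 + CONTINGENCY_RATE)


base = 100_000.0  # hypothetical annual spend at standard pricing
worst_case = geo_steered_cost(base, us_only_share=1.0)
budget = budget_with_contingency(base)
headroom = budget - worst_case

print(f"All traffic US-only: {worst_case:,.0f}")
print(f"Budget with contingency: {budget:,.0f}")
print(f"Headroom: {headroom:,.0f}")
```

Even if every request is routed US-only, the premium is 10% of base spend, so a 15% contingency leaves about 5% of base spend as headroom for volume growth.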

Strategic Conclusion: The Agentic Infrastructure Imperative & Venture Capital Shift

The $380 billion Anthropic valuation is not a speculative bubble—it is the market pricing in the irreversible shift from SaaS-based workflows to agentic infrastructure. With Claude Code generating $2.5 billion in run-rate revenue and authoring 4% of global GitHub commits, we are witnessing the emergence of AI-native enterprise architecture.

This transformation represents a fundamental Venture Capital Shift toward hardware-integrated AI companies. As detailed in our Entrepreneurship & Finance Category, the investment thesis has evolved from software-only AI applications to infrastructure-heavy platforms that combine proprietary compute assets with frontier model capabilities. Anthropic’s $30 billion Series G—specifically earmarked for Nvidia Blackwell B200 and AWS Trainium2 clusters—exemplifies this shift: investors are no longer funding codebases, they are funding digital infrastructure moats.

Immediate Action Items for B2B Decision-Makers:

- Audit Current Development Workflows: Identify tasks where Agent Teams could replace sequential development processes

- Evaluate Compute Partnerships: Assess whether your current AI vendor relationships provide infrastructure sovereignty or mere API access

- Pilot Effort Parameter Governance: Implement tiered effort controls to optimize latency and cost across different use cases

- Establish Context Management Protocols: Deploy Manual Anchoring for critical business logic and geo-steering for capacity planning

The enterprises that treat this transition as a strategic infrastructure shift—rather than a productivity tool adoption—will capture the 10x ROI that Cognizant and Air India are already realizing. The rest will face the same disruption that cloud computing imposed on data center operators a decade ago.